AI and Privacy: Why You Don’t Need a Supercomputer to Stay Safe (The Trishul Framework)

As I started working on bringing AI into my own life, I realized the very first thing I needed to address was privacy. I’ve been talking to a few people, and there’s a common notion that if you want to use AI privately, you absolutely must have high-end hardware to run local models.

But it got me thinking: do we really need the same level of privacy for everything we do?

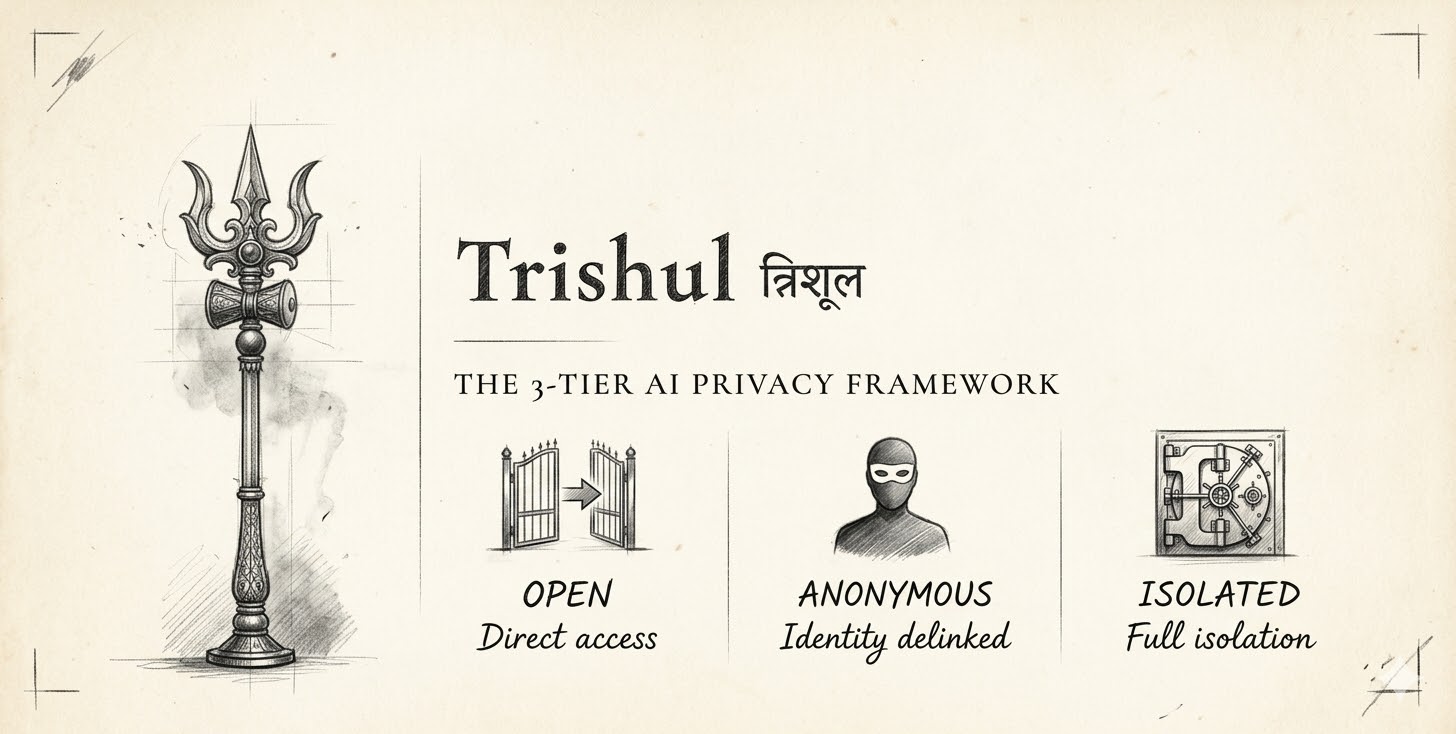

The truth is, we don’t. That’s why I’ve developed a three-tier privacy framework that I’ve been using for the last few days. I call it Trishul (Shiva’s Trident), because it has three prongs, providing three different levels of protection. This isn’t code; it’s a philosophical approach to how we handle our data while using AI.

The core problem we’re trying to solve is identity. Most apps tie everything you do to who you are, building a persona of you in the background. We want to break that link.

Here is how the three tiers work:

Tier 3: Open Use (General Research)

This is for your everyday tasks—general research, basic learning, or writing simple code. In these cases, it doesn’t really matter if the information is tied to you.

- How to use it: Just use the tools provided by AI companies as they are—the ChatGPT portal, Gemini, or their CLIs.

- The Privacy Reality: In Tier 3, nothing is “safe.” Everything you send goes to the provider, which can use it to build a history or a persona around you. But for general info, that’s often an acceptable trade-off.

Tier 2: Anonymous Use (Decoupling Identity)

This tier is where we solve the identity linkage. You want the results, but you don’t want the provider (like Google or Anthropic) to know it was you asking the question.

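- How to use it: Use a proxy provider. I personally use OpenRouter. When you send a request through a proxy, it forwards your prompt to the AI model on the back end under its own account, so the model provider doesn’t see your identity. Keep in mind that the proxy itself can still see your requests, so you are trusting it in place of the model provider.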

- Redaction: If you’re worried about details within the text, you can use personal information redaction tools to scrub names or ID numbers. I don’t personally use these much because if something is that sensitive, I move it to Tier 1.

- Best for: Things like competitive business analysis, where the search itself shouldn’t be tied back to your company or identity.
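To make the Tier 2 flow a bit more concrete, here is a minimal sketch that scrubs obvious identifiers from a prompt and then builds (but does not send) a request to OpenRouter’s OpenAI-compatible chat endpoint. The regex patterns and the `redact` and `build_request` helper names are my own assumptions for illustration, and the model name is left as a parameter you would fill in yourself. Real redaction tools cover far more cases than these two patterns.

```python
import json
import re
import urllib.request

# Assumed patterns for illustration only; they catch simple cases, not all PII.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
LONG_NUMBER = re.compile(r"\b\d{6,}\b")  # e.g. account or ID numbers

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def redact(text: str) -> str:
    """Replace email addresses and long digit runs with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return LONG_NUMBER.sub("[NUMBER]", text)


def build_request(prompt: str, api_key: str, model: str) -> urllib.request.Request:
    """Build a POST request for the proxy; the prompt is redacted first."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": redact(prompt)}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

To actually send it, you would pass the result to `urllib.request.urlopen` with your own API key and a model name from the proxy’s catalog.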

Tier 1: Full Isolation (The “Vault”)

There is a huge misconception that for full privacy, you need to spend thousands on hardware to run models locally. While that works, there’s a much easier path: AWS Bedrock.

- How to use it: AWS Bedrock gives you access to top-tier models under strong contractual data protections. Your data stays within the boundaries of your AWS account; it isn’t shared with the model providers or used to train their models.

- Why not local hardware? Unless you have a massive budget, you’ll eventually run out of “juice” as models get bigger and better, so investing in expensive hardware can be a losing game. Most organizations already trust AWS with their data because of its HIPAA and SOC compliance programs. I’d rather trust one solid infrastructure provider than five different AI startups.

- Best for: Anything deeply personal or highly confidential.
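For a sense of what a Tier 1 call could look like, here is a minimal sketch using the Converse API from `boto3`, the AWS SDK for Python. The helper names `build_converse_args` and `ask_bedrock` and the default region are assumptions for illustration. The model ID is left as a parameter because it depends on which models are enabled in your AWS account, and the sketch assumes your AWS credentials are already configured.

```python
def build_converse_args(prompt: str, model_id: str) -> dict:
    """Build the keyword arguments for a single-turn Converse call."""
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
    }


def ask_bedrock(prompt: str, model_id: str, region: str = "us-east-1") -> str:
    """Send one prompt to a Bedrock model and return the text of the reply."""
    # Third-party dependency (pip install boto3); imported here so the
    # request-building helper above can be used without it installed.
    import boto3

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.converse(**build_converse_args(prompt, model_id))
    return response["output"]["message"]["content"][0]["text"]
```

The request goes to the Bedrock endpoint in your chosen region using your own AWS credentials, which is what keeps the exchange inside your account boundary.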

My Personal Setup

To give you an idea of how this looks in practice:

- For general research: I go straight to the Gemini or Anthropic apps (Tier 3).

- For coding: I use a coding agent connected through OpenRouter (Tier 2). There’s usually no personal data in my code, but I prefer to keep my identity decoupled.

- For sensitive work: I use AWS Bedrock (Tier 1).

The bottom line is that privacy shouldn’t be “all or nothing.” You can decide the level of protection based on the task at hand.
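Trishul is an approach rather than code, but the decision itself is simple enough to sketch. The example below assumes just two yes/no questions per task; the `Tier` enum, the `choose_tier` function, and both parameter names are hypothetical and only illustrate the idea.

```python
from enum import Enum


class Tier(Enum):
    VAULT = 1      # full isolation, e.g. AWS Bedrock
    ANONYMOUS = 2  # identity decoupled through a proxy
    OPEN = 3       # provider apps used as they are


def choose_tier(is_confidential: bool, identity_sensitive: bool) -> Tier:
    """Pick the least restrictive tier that still protects the task."""
    if is_confidential:
        return Tier.VAULT
    if identity_sensitive:
        return Tier.ANONYMOUS
    return Tier.OPEN
```

For example, general research would be `choose_tier(False, False)` and land in Tier 3, while competitive analysis would be `choose_tier(False, True)` and land in Tier 2.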

What do you guys think? Do you have suggestions or things I should add? I’ve put the documentation up on GitHub, so feel free to check out the repo and start a discussion there.

I’m still exploring how to fully integrate AI into my day-to-day life, and I’ll share more as I go. Talk soon!